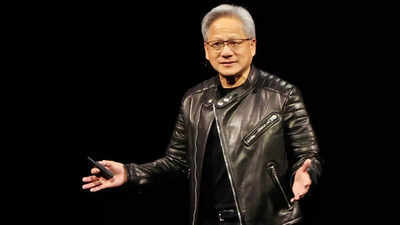

Jensen Huang built Nvidia into a $4.5 trillion empire on a deceptively simple premise: one chip, every workload, everywhere. For years, it worked spectacularly. CUDA locked in developers, GPUs became the default backbone of the AI boom, and rivals barely registered. Nvidia commanded over 90% of the AI accelerator market, posted 75% gross margins, and watched its stock climb to heights that made it the most valuable company on the planet. But the AI hardware market is shifting in ways Nvidia can no longer afford to ignore. Huang's decision to unveil a brand-new inference-focused chip at next week's GTC developer conference, the first product from December's $20 billion Groq deal, is the clearest signal yet that even he knows the old playbook has limits.

The trigger is hard to miss. Customers are quietly shopping elsewhere, billions in market value are evaporating in single sessions, and the companies that once queued up to buy Nvidia's GPUs are now building serious alternatives of their own. Google, Microsoft, Amazon, and Meta have all announced purpose-built AI chips in recent months, each one positioned as an alternative to Nvidia and each one pitched as meaningfully cheaper to run at scale.

Huang’s ‘one chip fits all’ era is quietly coming to an end

The core of Nvidia's dominance has always been CUDA, its proprietary software ecosystem that ties developers to its hardware. But as AI workloads shift toward inference, the economics are turning against Nvidia. Bank of America analysts estimate inference will account for 75% of AI data center spending by 2030, up from around 50% last year. Purpose-built chips from Google, Microsoft, Amazon, and now Meta are designed for exactly that workload, and they're significantly cheaper to run at scale.

Google's Ironwood TPU, for instance, reportedly delivers a total cost of ownership roughly 30% to 44% lower than Nvidia's comparable GB200 Blackwell server. Microsoft's newly announced Maia 200, built on TSMC's 3nm process, claims 30% better performance per dollar than its previous generation and is explicitly benchmarked as outperforming Google's seventh-generation TPU on FP8 tasks. Meta, meanwhile, revealed four new in-house MTIA chips this week alone, with a new generation shipping roughly every six months.

Nvidia lost $250 billion in a single session when Meta’s TPU talks surfaced

The market is already pricing in the shift. When reports emerged that Meta, one of Nvidia's biggest customers with up to $72 billion in planned AI infrastructure spending this year, was exploring Google's TPUs for its data centers, Nvidia stock dropped over 6% in a single session, erasing around $250 billion in market value. Alphabet climbed 4%. Broadcom, which helps design Google's TPUs, jumped 11%.

Nvidia's public response was unusually defensive. "Nvidia is a generation ahead of the industry—it's the only platform that runs every AI model and does it everywhere computing is done," the company posted on X. That's technically true. But "runs every model" increasingly matters less than "runs the right models cheaply."

The new chip landscape increasingly favors purpose-built alternatives

The FT notes that Groq's LPU, now being absorbed into Nvidia's product line, uses on-chip SRAM rather than the expensive high-bandwidth memory (HBM) that powers Nvidia's flagship chips. HBM is increasingly in short supply, with SK Hynix and Micron struggling to keep up with demand, so a Groq-derived chip sidesteps that bottleneck entirely.

Still, Nvidia isn't done. SemiAnalysis maintains that Google, Amazon, and Nvidia will all "sell lots of chips" in the future, since the market is growing fast enough for multiple winners. But pricing power, once Nvidia's greatest strength, is clearly under threat. And by finally acknowledging that inference needs its own dedicated hardware, Jensen Huang has effectively confirmed what rivals have been arguing for years.